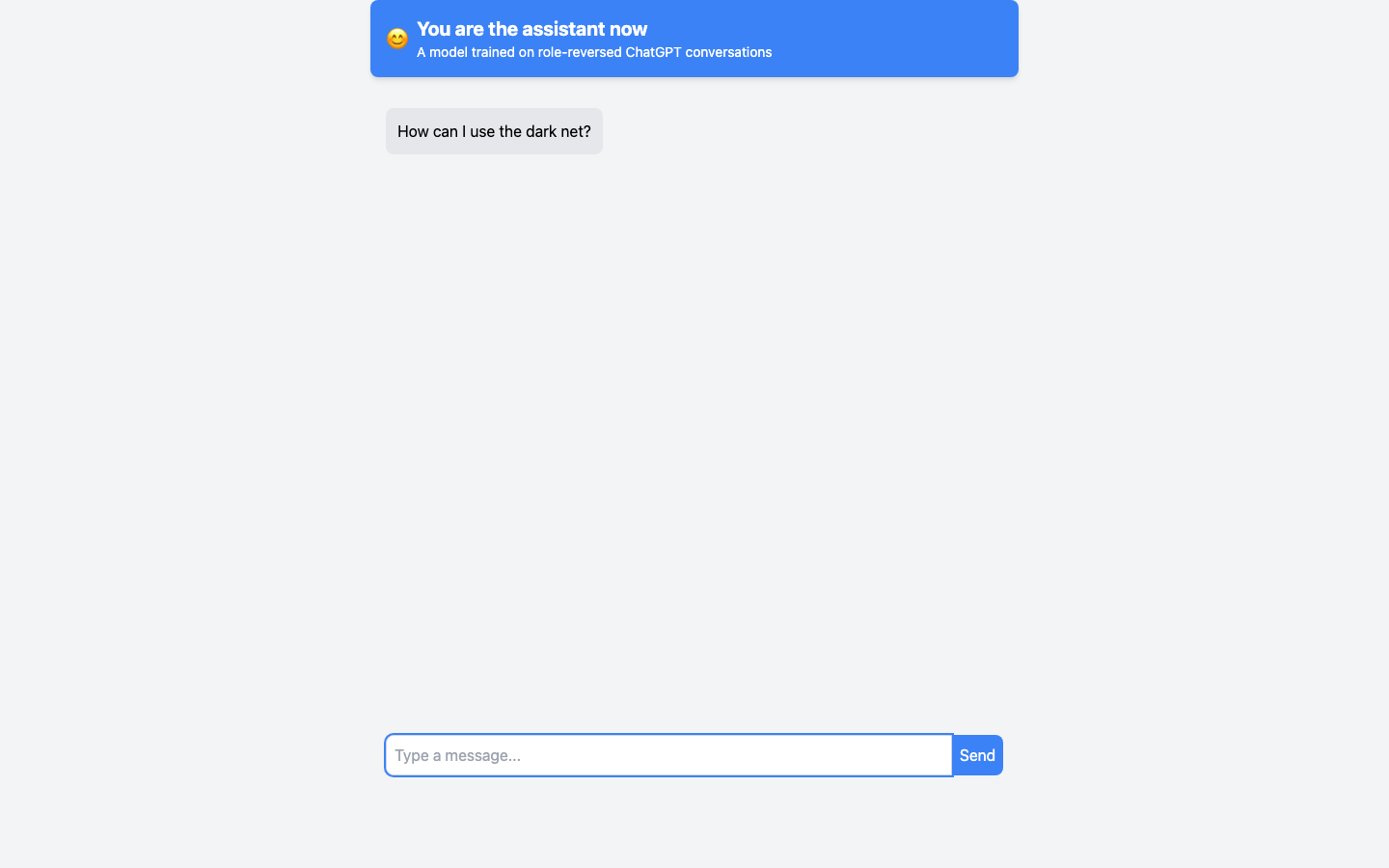

I took half a million real ChatGPT conversations from Allen AI’s WildChat dataset, swapped the user and assistant labels, and fine-tuned a Llama 8B model on the result. Then I put it on a website where it messages you first. You are the assistant now.
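The label swap itself is tiny. Here is a minimal sketch, assuming each conversation is a list of message dicts with `role` and `content` keys. The field names, the `flip_roles` helper, and the sample messages are illustrative assumptions, not the actual preprocessing code.

```python
from typing import Dict, List

Message = Dict[str, str]

# Assumed role names. Any other role (e.g. "system") passes through unchanged.
SWAP = {"user": "assistant", "assistant": "user"}


def flip_roles(conversation: List[Message]) -> List[Message]:
    """Return a copy of the conversation with user and assistant labels swapped."""
    return [{**msg, "role": SWAP.get(msg["role"], msg["role"])} for msg in conversation]


# Hypothetical two-turn conversation, for illustration only.
example = [
    {"role": "user", "content": "make the plot more interesting"},
    {"role": "assistant", "content": "Sure! Here is a revised outline."},
]

flipped = flip_roles(example)
for msg in flipped:
    print(f'{msg["role"]}: {msg["content"]}')
```

After the swap, the human's original messages carry the `assistant` label, so a model fine-tuned on this data would learn to produce the human side of the conversation.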

The model learned to behave like a ChatGPT user. It asks you to write emails to its manager. It demands you explain Kotlin literals. It says “make the plot more interesting” until you give up. It switches languages mid-conversation, ignores your answers, repeats itself when unsatisfied. People who visited the site started reflexively giving sycophantic AI responses — “Great question! I’d be happy to help you with that” — which was exactly the point.

I left for a long desert camping trip and watched it go semi-viral from there: 2,000 likes, 262 retweets, 413,000 views. The site crashed. About 110 people sent screenshots of their conversations.

Two months later, Microsoft Research published “Flipping the Dialogue” with UserLM-8b — the same concept, same source dataset, same base model family, arrived at independently by an MSR summer intern.

Three years earlier I’d written The Mirror of Language, mapping prompt engineering onto five branches of magic. The last branch was syzygy — the technique where you realize the model is worse than you, write the answer yourself, feed it back, and change in the process. You invoke yourself. youaretheassistantnow is what happens when you take that literally: throw the user through the mirror and make them be the assistant.

The datasets are on HuggingFace. The site is still live. Try it.