What does a letter look like to a machine that has to draw one from scratch?

I trained a GAN on every Unicode symbol I could render, then fine-tuned it on the 1,000 most common four-letter English words. The outputs are animated GIFs that morph between word-states like a snake swimming through water. Sometimes the GAN lands on a real English word. Sometimes it passes through invented ones: the in-between places in latent space where no word exists yet.
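For readers curious how a morph like this can be produced in general, here is a minimal sketch of walking a closed loop through latent space. It is an illustration under assumptions, not the code behind these GIFs: the 128-dimensional latent size, the four random keyframes, and the helper names `slerp` and `latent_loop` are all hypothetical, and spherical interpolation is just one common choice for Gaussian latents.

```python
import numpy as np

def slerp(z0, z1, t):
    """Spherical interpolation between two latent vectors (t in [0, 1])."""
    u0 = z0 / np.linalg.norm(z0)
    u1 = z1 / np.linalg.norm(z1)
    omega = np.arccos(np.clip(np.dot(u0, u1), -1.0, 1.0))
    if np.isclose(omega, 0.0):
        # Nearly parallel vectors: fall back to plain linear interpolation.
        return (1.0 - t) * z0 + t * z1
    return (np.sin((1.0 - t) * omega) * z0 + np.sin(t * omega) * z1) / np.sin(omega)

def latent_loop(keyframes, steps_per_segment):
    """Return latent vectors tracing a closed path through the keyframes."""
    path = []
    n = len(keyframes)
    for i in range(n):
        z0, z1 = keyframes[i], keyframes[(i + 1) % n]  # wrap around to close the loop
        for t in np.linspace(0.0, 1.0, steps_per_segment, endpoint=False):
            path.append(slerp(z0, z1, t))
    return np.stack(path)

# Hypothetical setup: 4 "word-states" in a 128-dimensional latent space.
rng = np.random.default_rng(0)
keyframes = rng.standard_normal((4, 128))
path = latent_loop(keyframes, steps_per_segment=16)
# Each row of `path` would be fed to a trained generator to render one GIF frame.
```

The frames that land exactly on a keyframe correspond to the "word-states"; everything between them is the in-between territory where invented words can show up.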

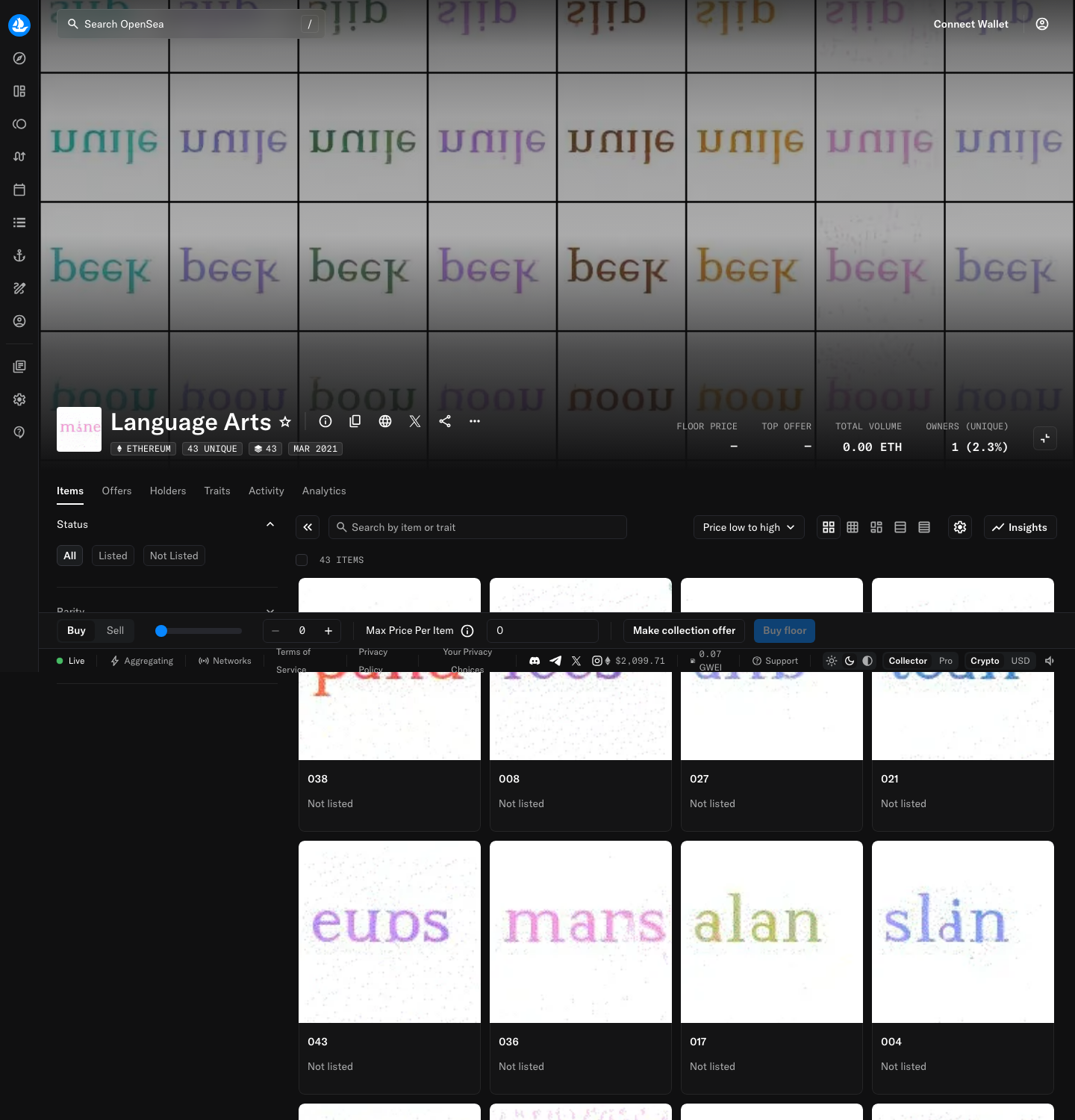

444 on OpenSea, with the note: “I can’t imagine why you would, but there it is.”

Three months later I wrote Unified Meme Theory, arguing that CLIP had “learned to read” and that text and images are encoded the same way.

Archive

- No Tofu — the precursor, training on Unicode symbols

- Language Arts Notes — production log with training videos at each milestone

- Language Arts 1.0 — the release, with sample GIFs