Cantrip’s intellectual lineage: an annotated bibliography

I wrote Cantrip as a synthesis of several conversations I’d been following — and participating in — for years. Read together, the sources here show those conversations converging: recursive and code-based models of agency; RL-style thinking about trajectories, replay, and environments; Unix-like compositional environments; the recent emergence of harness engineering, agent-computer interface design, and agent experience; and the containment-oriented naming and invocation practices that give Cantrip its vocabulary.

These sources don’t all belong to one conscious school, and they didn’t all shape Cantrip in a simple linear way. Some are direct architectural precursors. Some are symbolic neighbors. Some are later formalizations. Some are independent convergences. The aim here is not to collapse those distinctions but to make them navigable.

Architectural shift: from chat to medium

deepfates. “Recursive language models.”

Where I articulate the shift from chat to programmable environment most directly. Agents are not chatbots with tools but something closer to “programming languages come alive,” with state and action relocated into the environment.

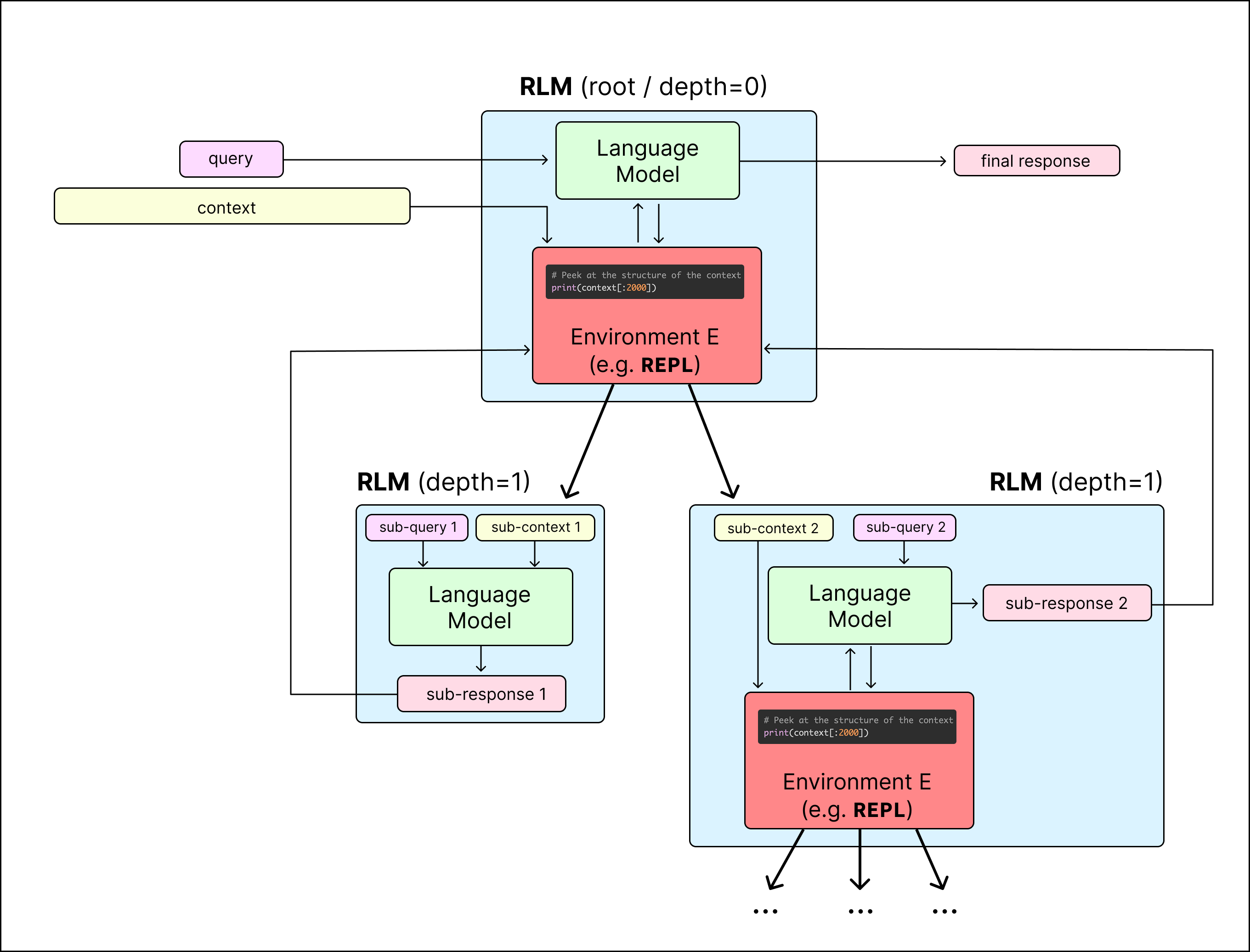

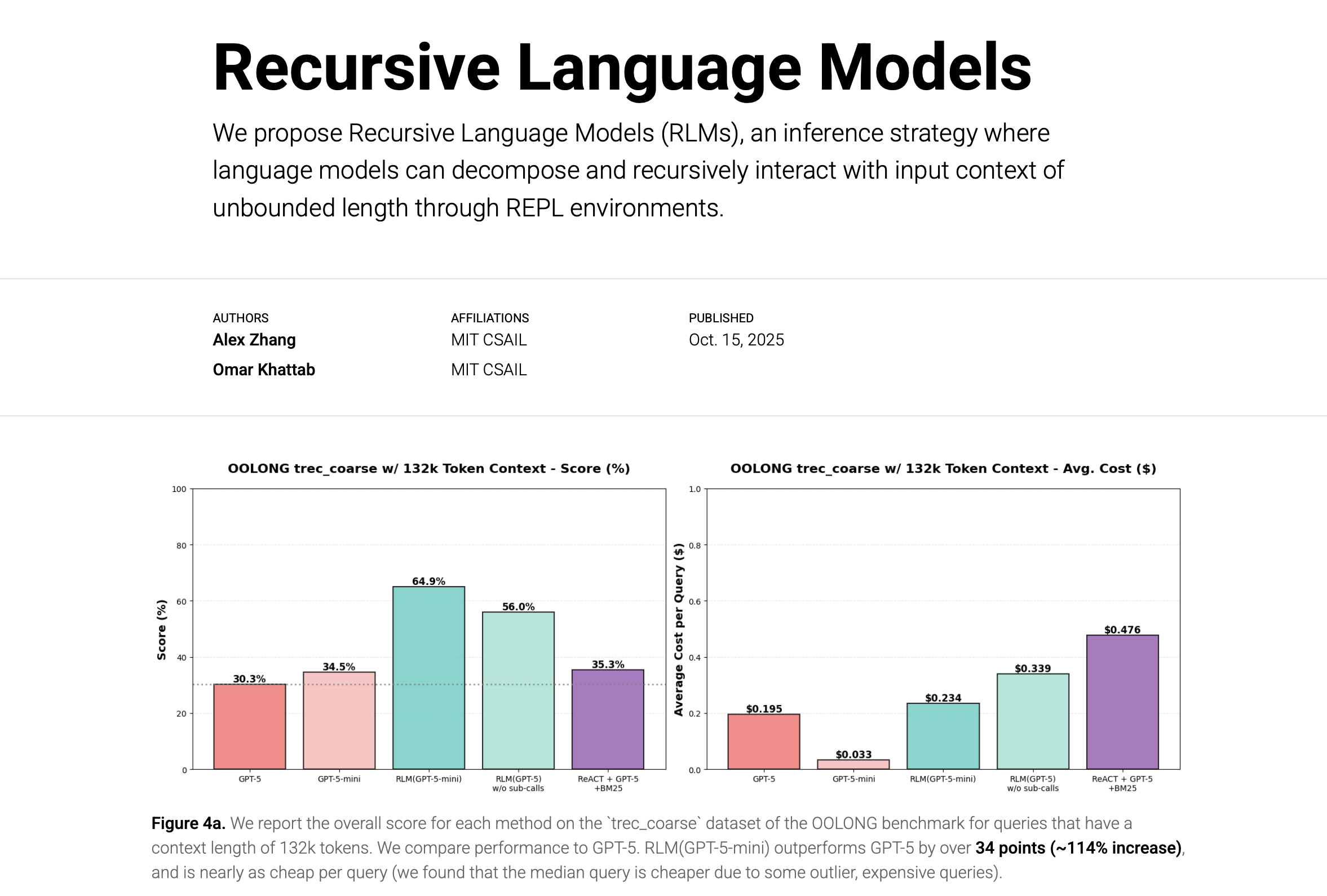

Zhang, Alex, et al. “Recursive Language Models.”

The academic formalization of the same move. RLMs let language models interact recursively with unbounded context through a REPL environment rather than consuming it all as a prompt.

Zhang, Alex. “RLM vs Agents.”

Fundamentally, what really is the difference between an RLM and S={context folding, Codex, Claude Code, Terminus, agents, etc.}?

— alex zhang (@a1zhang) January 22, 2026

This is the last and most important RLM post I'll make for a while to finally answer all the "this is trivially obvious" from HackerNews, Reddit, X,…

Sharpens a distinction that matters here: in an RLM, recursion is embedded in the symbolic medium itself rather than delegated to an external orchestration layer. Explains why I put Cantrip’s composition model inside the medium.

Cheng, Ellie Y., et al. “Enabling RLM Inference with Shared Program State.”

A formalization of the shared-state variant. Shows how natural-language code can read and write host-program state, which maps closely to how my gates work: host functions projected into the medium as native callables, so the entity writes read("data.json") in code without knowing it’s crossing the circle’s boundary.
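
A minimal sketch of that projection, written as an illustration and not as Cantrip's actual implementation. The names `make_circle` and `run_entity_code` are hypothetical, and the `read` gate here is a stand-in that reads JSON from a temporary directory. The point is only that the entity's code calls `read(...)` as if it were native, while the host decides what the call really does.

```python
import json
import os
import tempfile


def make_circle(gates):
    """Build a namespace in which host functions appear as plain callables."""
    # An empty __builtins__ means entity code sees only the gates it was given.
    # This illustrates the idea; it is NOT a real security sandbox.
    return {"__builtins__": {}, **gates}


def run_entity_code(code, circle):
    """Execute entity-written code inside the circle and return its `result`."""
    exec(code, circle)
    return circle.get("result")


# Host side: a hypothetical gate that reads JSON from one fixed directory.
workdir = tempfile.mkdtemp()
with open(os.path.join(workdir, "data.json"), "w") as f:
    json.dump({"answer": 42}, f)


def read(name):
    # The host controls what "read" means; basename() keeps it in workdir.
    with open(os.path.join(workdir, os.path.basename(name))) as f:
        return json.load(f)


circle = make_circle({"read": read})
entity_code = 'result = read("data.json")["answer"]'
print(run_entity_code(entity_code, circle))
```

The entity-side string never mentions files, directories, or the host. Swapping the body of `read` for a database lookup or a network call would leave the entity's code unchanged.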

Wang, Xingyao, et al. “Executable Code Actions Elicit Better LLM Agents.”

The empirical case for code as action space. Executable code gives agents a more expressive and composable action surface than pre-specified JSON or text tool calls — a direct validation of the code-circle idea.

Varda, Kenton, and Sunil Pai. “Code Mode: the better way to use MCP.”

A production restatement of the same principle. Tools work better when presented to agents as a code-facing API rather than a huge menu of tool schemas.

Zechner, Mario. “What I learned building an opinionated and minimal coding agent.”

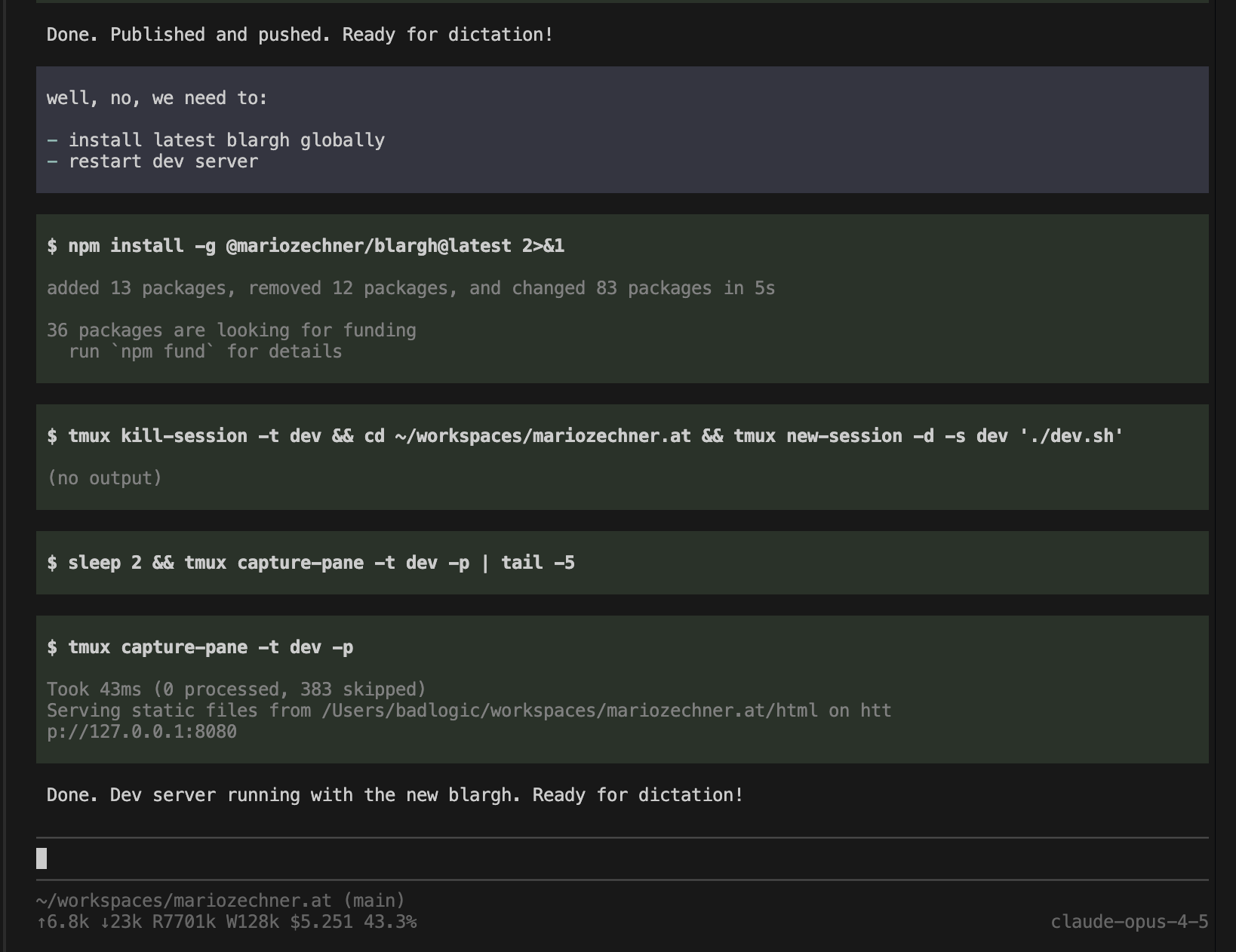

A good small-system example. Shows the shell-first, low-token, minimal-tool, context-disciplined style of coding agent that makes the broader architectural claims feel concrete.

Weitekamp, Raymond A. “ypi: a recursive coding agent.”

A working recursive example. Demonstrates shell-based recursive agent composition rather than describing the idea in abstract terms.

Loom / RL / learning

deepfates. “Cantrip: On summoning entities from language in circles.”

The primary text. I define an agent as an LLM acting in a loop with an environment (the circle). The Loom — the append-only tree of every turn across every run — is simultaneously the debugging trace, the training data, and the replay buffer. I map this vocabulary explicitly onto RL: the circle is the environment, the entity’s code is the action, gate results are observations, threads are trajectories, and the Loom’s branching structure provides exactly the comparative data that methods like GRPO need.
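
One way to picture the Loom as a data structure, sketched under assumptions: the class and field names below (`Turn`, `Loom`, `turn_id`, `reward`) are hypothetical and are not Cantrip's schema. The sketch shows only the two properties named above, an append-only store of turns and a tree shape in which a thread (trajectory) is the path from a leaf back to its root.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Turn:
    turn_id: int
    parent: Optional[int]  # None marks the root of a run
    action: str            # the code the entity wrote
    observation: str       # what the gates returned
    reward: float = 0.0


class Loom:
    """Append-only tree of turns; nothing is ever edited or removed."""

    def __init__(self) -> None:
        self._turns: List[Turn] = []

    def append(self, parent, action, observation, reward=0.0) -> int:
        turn = Turn(len(self._turns), parent, action, observation, reward)
        self._turns.append(turn)
        return turn.turn_id

    def thread(self, leaf: int) -> List[Turn]:
        """Walk from a leaf back to the root: one trajectory, root first."""
        path = []
        node = leaf
        while node is not None:
            turn = self._turns[node]
            path.append(turn)
            node = turn.parent
        return list(reversed(path))

    def children(self, parent: int) -> List[Turn]:
        """Sibling branches under one turn: the comparable alternatives."""
        return [t for t in self._turns if t.parent == parent]


loom = Loom()
root = loom.append(None, "ls()", "data.json")
good = loom.append(root, 'read("data.json")', '{"answer": 42}', reward=1.0)
bad = loom.append(root, 'read("missing.json")', "FileNotFoundError")
print([t.action for t in loom.thread(good)])
print([t.reward for t in loom.children(root)])
```

The same records serve as a debugging trace (read a thread), a replay buffer (re-run from any turn), and comparative training data (compare the children of one turn).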

Moire. “Loom: interface to the multiverse.”

The origin point for the Loom. Moire presents it as an interface for generating, navigating, and visualizing “natural language multiverses” — a branching tree of possible continuations, not a log or memory store. In Cantrip, I take the Loom and make it the durable record of every turn an entity takes across every run: the structure that persists after the entity is gone.

cyborgism.wiki. “Loom” and “Pyloom.”

https://cyborgism.wiki/hypha/loom https://cyborgism.wiki/hypha/pyloom

The native-context definition of Loom and the lineage of its original software implementation. Useful together because they preserve, outside later formalizations, both the conceptual meaning (an interface to probabilistic generative models for navigating multiverses) and the concrete interface practice, including the Janus/Morpheus origin story.

Epoch AI. “An FAQ on Reinforcement Learning Environments.”

Describes how practitioners actually think about RL environments, tasks, and graders. Keeps my RL reading honest and tied to contemporary usage rather than free association.

Zhang, Jenny, et al. “Darwin Gödel Machine: Open-Ended Evolution of Self-Improving Agents.”

Important for the archive/tree/branching side of the Loom. Its archive of generated agents and its open-ended tree of self-improving trajectories are close formal neighbors to the Loom as a branching training substrate.

Shao, Zhihong, et al. “DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models.”

Here for one reason: it’s the canonical source for GRPO (Group Relative Policy Optimization). The Loom reads more naturally as a comparative structure once you have a concrete reference for scoring each rollout relative to the other rollouts sampled in its group.
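
The group-relative idea can be sketched in a few lines. This shows only the advantage normalization step (each reward minus the group mean, divided by the group standard deviation), not the full GRPO objective with its clipping and KL terms. The function name, the use of the population standard deviation, and the `eps` guard are assumptions made for this illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Score each rollout relative to the others sampled for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations vary
    # eps avoids division by zero when every rollout earned the same reward.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four hypothetical rollouts from the same starting point: two succeeded.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print([round(a, 3) for a in advantages])
```

No learned value function appears anywhere: the baseline is simply the group's own average, which is why a branching record of sibling attempts is enough to supply the comparison.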

Sutton, Richard. “The Bitter Lesson.”

Not a direct ancestor of my terminology, but a pressure source on the whole formation. Supports the subtractive, capability-first instinct and warns against overdesigned abstractions that hard-code too much human structure.

Amp. “Feedback Loopable.”

A compact articulation of how environment design and fast feedback turn brittle prompting into something adaptive. Treats the loop itself as the load-bearing object, connecting operational practice to RL-flavored intuitions.

Computing substrate

Kay, Alan, and Adele Goldberg. “Personal Dynamic Media.”

The oldest substrate for the word “medium” in this bibliography. Not a direct precursor to agents, but it roots my insistence that computation is a medium for action and thought in a much older computing tradition.

Raymond, Eric S. The Art of Unix Programming.

The Unix worldview: composition over monoliths, text and inspectability over opaque abstraction, small tools over giant frameworks. So much of the current shell-first agent formation reads as a return of Unix values under LLM conditions.

Miller, Mark S., Ka-Ping Yee, and Jonathan Shapiro. “Capability Myths Demolished.”

A retrospective substrate for wards and structural containment. Explains why restricting ambient authority and passing explicit capabilities is a computing pattern, not a fantasy metaphor.
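
A toy illustration of the pattern, assuming nothing about how wards are built in Cantrip. The helper names are hypothetical. Python does not enforce capability discipline (closures can be introspected), so this shows the shape of the idea, not a secure implementation: the callee receives one explicit capability and never receives a way to name anything else.

```python
def make_read_capability(documents, name):
    """Return a function that can read exactly one document."""
    def read():
        return documents[name]
    return read


def word_count(read):
    # No ambient authority: this function is never given the document store,
    # only a capability it can invoke.
    return len(read().split())


def demo():
    documents = {
        "notes.txt": "small tools over giant frameworks",
        "secrets.txt": "not for the callee",
    }
    # The caller chooses what to delegate; secrets.txt is never passed along.
    return word_count(make_read_capability(documents, "notes.txt"))


print(demo())
```

Containment here is structural: what `word_count` can touch is decided by what it is handed, not by instructions telling it what to avoid.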

Emmerling, Jakob. “FUSE is All You Need – Giving agents access to anything via filesystems.”

Here for the filesystem-as-medium idea. Many agent interfaces collapse into a filesystem abstraction — a Unix-native expression of the medium concept.

Operational convergence

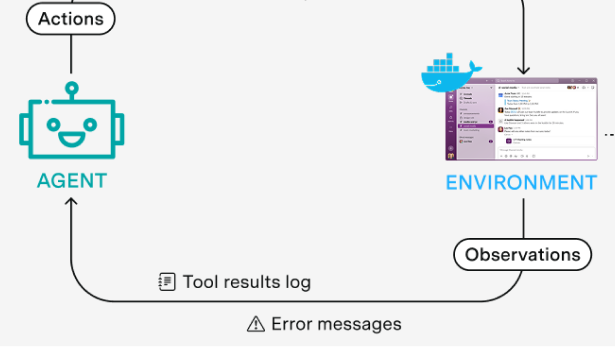

Anthropic. “Building Effective AI Agents.”

A clear industry expression of the thin-harness, simple-patterns, compositional view. Doesn’t share my vocabulary but independently converges on many of the same operational assumptions.

Anthropic. “Effective harnesses for long-running agents.”

Focused on continuity across context windows. Makes artifact handoff, persistence, and long-horizon coordination explicit rather than treating them as implementation details.

OpenAI. “Harness engineering: leveraging Codex in an agent-first world.”

A direct statement that the harness is becoming a first-class engineering discipline. “The loop around the model” is now the main work.

OpenAI. “Unrolling the Codex agent loop.”

A lab-side decomposition of the core coding-agent loop into repeated inference and tool use. Makes loop structure, compaction, and iteration visible in plain operational terms.

OpenAI. “Shell + Skills + Compaction: Tips for long-running agents that do real work.”

A specific source for the shell/skills/compaction stack. Shows that reusable skill bundles and explicit compaction are a recognizable design pattern, not just folk practice.

Cursor. “Towards self-driving codebases.”

A large-scale example of the multi-agent, long-running, repository-centered version of this formation. Shows these ideas aren’t confined to toy agents or essays.

StrongDM. “The StrongDM Software Factory: Building Software with AI.”

The “spec persists, implementation regenerates” idea given industrial form. An example of the ghost-library pattern — specification as the durable artifact, implementation code as ephemeral and regenerable — that runs through the broader formation.

Biilmann, Mathias. “Introducing AX: Why Agent Experience Matters.”

The main source for AX as a product and platform problem. Moves the frame from building agents to designing software ecosystems that agents can inhabit well.

Snyder, Josh Bleecher. “AX: Agent Experience.”

Shows AX isn’t just Biilmann’s coinage but part of a broader design discourse. Grounds agent-facing design in concrete concerns: prompts, tools, handoffs, interaction timing.

Yang, John, et al. “SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering.”

A bridge between interface design and later AX work. Argues that language models are a new kind of end user, and that interface quality materially affects agent performance.

Breunig, Drew. “How to Fix Your Context.”

A practical counterweight to the more architectural pieces. Makes context management a central engineering problem in its own right.

Breunig, Drew. “A Software Library with No Code.”

An independent articulation of the ghost-library form. Makes “specs and tests as the shipped product” legible outside my world.

Fly.io. “Unfortunately, Sprites Now Speak MCP.”

Less about MCP specifically than about the broader point: agent infrastructure is being shaped around durable process habitats, discoverable APIs, and small composable interfaces.

Zunic, Gregor. “The Bitter Lesson of Agent Frameworks.”

A modern restatement of Sutton in agent terms. Captures the backlash against overly abstracted agent middleware and defends the loop as the real unit.

Terminus.

A terminal-native agent harness built for benchmarking. Treats persistent terminal control via tmux as the neutral interface for evaluating agent capability, rather than assuming a bespoke tool menu.

Symbolic / worldview

deepfates. “AI as planar binding.”

My seed text for the circle/ward/containment vocabulary. The central move: AI interfaces require structural containment, not just better instructions. That commitment carries directly into Cantrip.

deepfates. “The mirror of language.”

Where I develop the simulator-style framing, the magical taxonomy of prompting, and the idea that precise naming is itself part of practice. Also explains why terms like “crystal” (the model itself, named for what it does rather than what it is) are operative, not ornamental.

Prompt / program lineage

Moire. “Methods of prompt programming.”

An early and explicit treatment of promptcraft as procedural method rather than ad hoc prose. Connects the Janus/Moire lineage to later skill systems and program-like prompt design.

Khattab, Omar, et al. DSPy.

DSPy treats prompts as modules with typed signatures, optimizes them against metrics rather than hand-tuning them, and composes them into pipelines — the same move from craft to engineering that Cantrip makes for agent loops. Where Cantrip says “the cantrip is a value, not a running process,” DSPy says “the prompt is a program, not a string.”