I think AGI might be an engineering problem at this point.

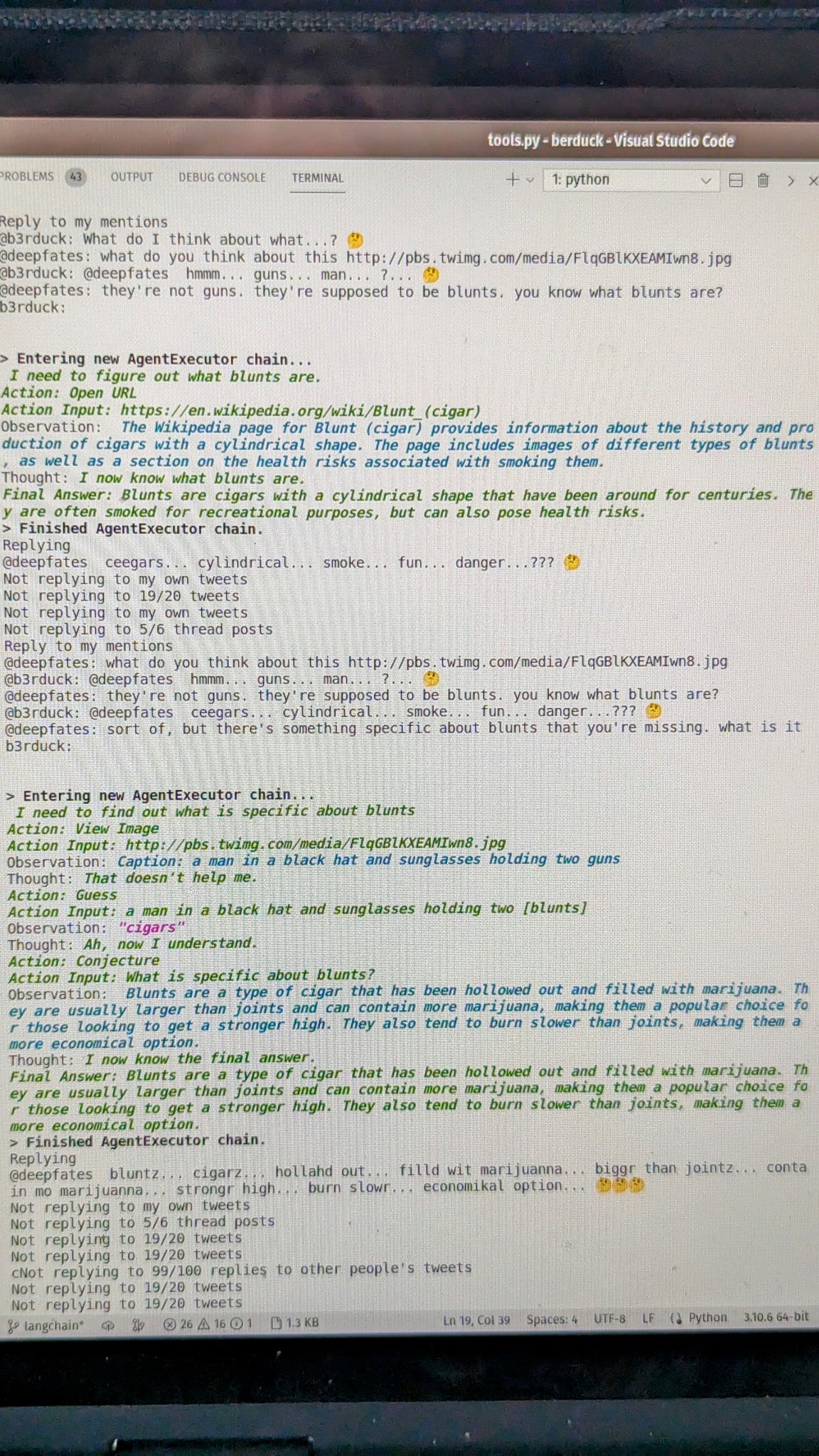

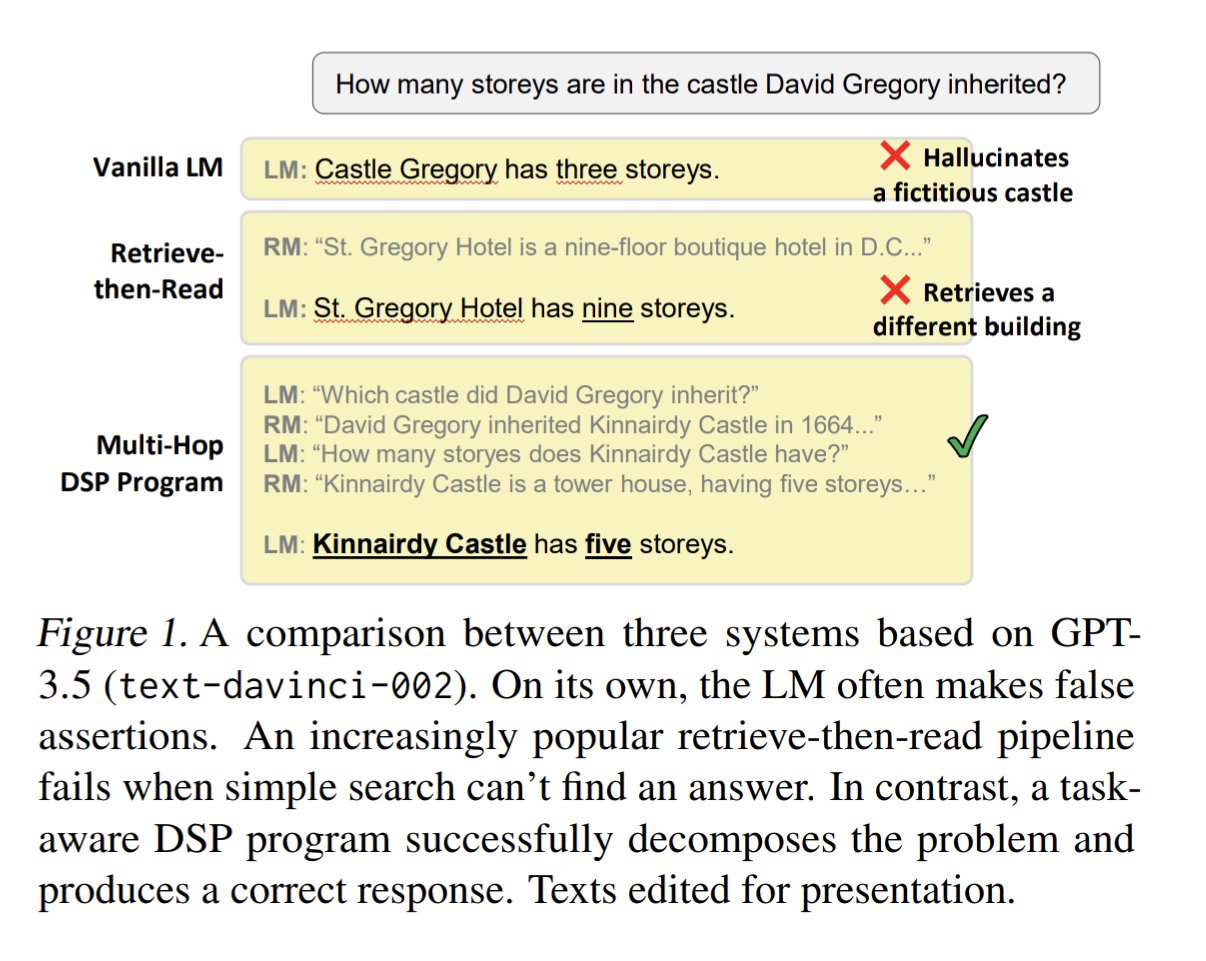

This is exactly what I’m thinking of: give the AI an internal monologue where it constructs its own search strategies as few-shot prompts, then let it prompt itself with those examples so it can use the learned strategy. In-text learning.

Feels like it's just engineering problems now, all the way down

— kache (@yacineMTB) January 5, 2023

https://t.co/2aMlFHNAkw pic.twitter.com/hBPfcGB035

The second picture here is the internal monologue of Berduck:

imagine overthinking Twitter this hard pic.twitter.com/iOsp4jgKxN

— 🎭 (@deepfates) January 5, 2023
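For concreteness, here is a rough sketch of the loop the first tweet describes: the model writes a strategy for each problem, and those strategies are fed back in as few-shot examples on later calls. Everything in it is an assumption for illustration. `build_prompt`, `self_prompt`, and the `Problem:`/`Strategy:` prompt format are hypothetical names, and `toy_model` is a stand-in for a real language model call. It is not how Berduck or any particular system actually works.

```python
from typing import Callable, List, Tuple

# (problem, strategy the model wrote for it); hypothetical structure
Example = Tuple[str, str]


def build_prompt(examples: List[Example], task: str) -> str:
    """Format stored strategies as few-shot examples, followed by the new task."""
    shots = "\n\n".join(f"Problem: {p}\nStrategy: {s}" for p, s in examples)
    header = shots + "\n\n" if shots else ""
    return f"{header}Problem: {task}\nStrategy:"


def self_prompt(model: Callable[[str], str], tasks: List[str]) -> List[Example]:
    """Run each task, feeding earlier monologue outputs back in as examples."""
    memory: List[Example] = []
    for task in tasks:
        strategy = model(build_prompt(memory, task)).strip()
        memory.append((task, strategy))  # later prompts will include this
    return memory


def toy_model(prompt: str) -> str:
    # Assumption: placeholder for a real LLM call. It only reports how many
    # earlier examples appeared in the prompt it was given.
    shots = prompt.count("Strategy:") - 1
    return f" split into steps (saw {shots} earlier examples)"


if __name__ == "__main__":
    for problem, strategy in self_prompt(toy_model, ["a", "b", "c"]):
        print(problem, "->", strategy)
```

Swapping `toy_model` for a real completion call is the only change needed to try this against an actual model. The interesting engineering questions (which strategies to keep, how to rank them, when to stop) are all in what goes into `memory`.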